[TOC]

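0x00 Overview

Description: Learning any new technology inevitably involves pitfalls. Recording each one as you hit it means that the next time the same problem appears, you can resolve it immediately.

0x01 Configuration Files and Startup Parameters

1. Kubelet startup parameters

Summary of startup parameters: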

--register-node [Boolean] # whether the node registers itself automatically

/etc/kubernetes/kubelet.conf

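About the build environment

You can separate the build environment from the deployment environment to suit your situation, for example:

When learning, follow this tutorial and use the Kubernetes master node to build and push images

When developing, build and push images on your own laptop

At work, use a Jenkins Pipeline or a gitlab-runner pipeline to build and push images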

K8S Containerd image garbage collection (GC) parameters

Reference: https://kubernetes.io/docs/concepts/architecture/garbage-collection/

$ vim /var/lib/kubelet/config.yaml

imageGCHighThresholdPercent: 85

imageGCLowThresholdPercent: 80

maxPods: 180 # maximum number of Pods

After changing any kubelet configuration, run the following commands:

systemctl daemon-reload

systemctl restart kubelet.service
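As a rough illustration of how the two thresholds interact, the sketch below treats them as percentages of disk usage: once usage reaches imageGCHighThresholdPercent, the kubelet starts deleting unused images and aims to bring usage back down to imageGCLowThresholdPercent. The function name image_gc_plan is hypothetical, and the behaviour exactly at the boundary value is an assumption.

```shell
# image_gc_plan USED HIGH LOW  (all integer percentages of disk usage)
# Hypothetical helper: reports whether image GC would be triggered.
image_gc_plan() (
  used=$1 high=$2 low=$3
  if [ "$low" -ge "$high" ]; then
    echo "invalid: low threshold must be below high threshold"
    exit 1
  fi
  if [ "$used" -ge "$high" ]; then
    # Assumption: GC triggers at or above the high threshold and
    # frees images until usage is back at the low threshold.
    echo "gc: free about $((used - low))% of the disk"
  else
    echo "no gc"
  fi
)

# With the values from config.yaml above and a disk that is 90% full:
image_gc_plan 90 85 80
```

Keeping the two values only a few points apart, as above, means each GC pass frees a small amount of space and runs more often.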

Description: APISERVER_NAME must not be the master's hostname, and may contain only lowercase letters, digits, and dots (no hyphens), for example export APISERVER_NAME=apiserver.weiyi.

The network range used by POD_SUBNET must not overlap with the network of the master or worker nodes (the value is a CIDR, Classless Inter-Domain Routing), for example export POD_SUBNET=10.100.0.1/16.

Solution:

# 1. If the Kubernetes Docker images cannot be downloaded, switch the image repository and initialize manually

# --image-repository=mirrorgcrio

# --image-repository=registry.cn-hangzhou.aliyuncs.com/google_containers

~$ kubeadm config images list --image-repository=registry.cn-hangzhou.aliyuncs.com/google_containers

registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.19.6

registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.19.6

registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.19.6

registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.19.6

registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.2

registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.4.13-0

registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:1.7.0

# 2. Check the environment variables

echo MASTER_IP=${MASTER_IP} && echo APISERVER_NAME=${APISERVER_NAME} && echo POD_SUBNET=${POD_SUBNET}

Tips: before re-initializing the master node, run kubeadm reset -f first.
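The constraints on APISERVER_NAME and POD_SUBNET can be checked before running kubeadm. The following is a minimal sketch in POSIX shell; the function names are hypothetical, and the node network 10.10.107.0/24 in the usage lines is an assumption chosen for illustration. Two CIDR ranges overlap exactly when they agree on the shorter of the two prefixes, which is what cidr_overlap tests.

```shell
# valid_apiserver_name NAME MASTER_HOSTNAME
# Succeeds when NAME differs from the master hostname and contains
# only lowercase letters, digits and dots.
valid_apiserver_name() (
  [ -n "$1" ] || exit 1
  [ "$1" != "$2" ] || exit 1
  case $1 in
    *[!a-z0-9.]*) exit 1 ;;
  esac
  exit 0
)

# ip_to_int A.B.C.D : print the address as a 32-bit integer
ip_to_int() (
  IFS=.
  set -- $1
  echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
)

# cidr_overlap CIDR1 CIDR2 : succeed when the two ranges overlap
cidr_overlap() (
  ip1=${1%/*} len1=${1#*/}
  ip2=${2%/*} len2=${2#*/}
  if [ "$len1" -lt "$len2" ]; then min=$len1; else min=$len2; fi
  # Network mask for the shorter prefix
  mask=$(( (4294967295 << (32 - min)) & 4294967295 ))
  [ $(( $(ip_to_int "$ip1") & mask )) -eq $(( $(ip_to_int "$ip2") & mask )) ]
)

valid_apiserver_name "apiserver.weiyi" "master-01" && echo "name ok"
cidr_overlap "10.100.0.1/16" "10.10.107.0/24" || echo "no overlap"
```

Running a check like this first avoids discovering a bad value only after kubeadm init has already failed and a reset is needed.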

Pending[ImagePullBackoff]异常问题问题描述:

1 | $kubectl get pods calico-node-4vql2 -n kube-system -o wide |

1 | #(1)通过get pods找到pod被调度到了哪一个节点并,确定 Pod 所使用的容器镜像: |

weiyigeek.top-Pending

ContainerCreating、PodInitializing 或 Init:0/3 的状态:1 | #(1)查看该 Pod 的状态 |

/etc/hosts 是否正确设置? 是否有安全组或防火墙的限制?1 | #master节点验证 |

kubeadm token create错误信息:1

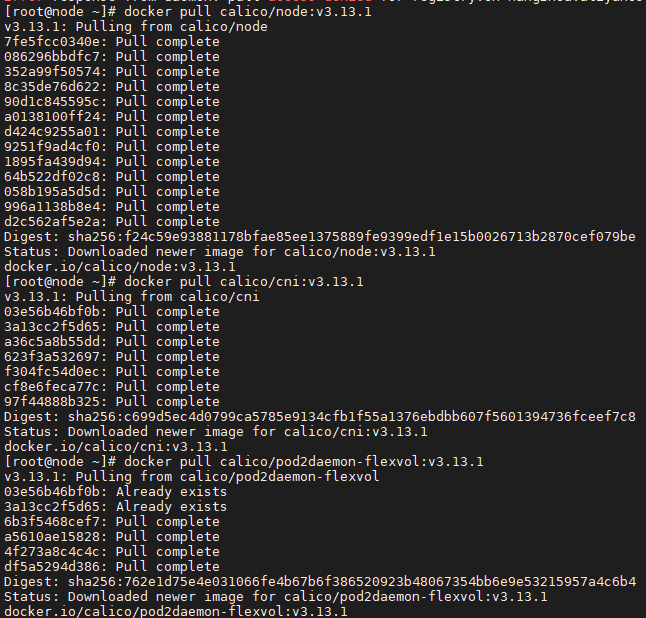

2kubectl apply -f calico-3.13.1.yaml

The connection to the server localhost:8080 was refused - did you specify the right host or port?

错误原因: 由于在初始化之后没将k8s的/etc/kubernetes/admin.conf拷贝到用户的加目录之中/root/.kube/config

Solution:

# (1) Configure cluster access for a regular user

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

# (2) Set the KUBECONFIG environment variable at login and enable command completion for the k8s tools

grep -q "export KUBECONFIG" ~/.profile || echo "export KUBECONFIG=$HOME/.kube/config" >> ~/.profile

tee -a ~/.profile <<'EOF'

source <(kubectl completion bash)

source <(kubeadm completion bash)

# source <(helm completion bash)

EOF

source ~/.profile

PS: If you run kubeadm as a regular user when initializing or joining the cluster, prefix the command with sudo (sudo kubeadm init ...); otherwise it fails with [ERROR IsPrivilegedUser]: user is not running as root.
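Appending to ~/.profile is easy to repeat by accident when the steps are rerun after a reset. As a small sketch, the hypothetical helper append_once below adds a line only when the exact line is not already in the file; the demo writes to a temporary file rather than the real ~/.profile.

```shell
# append_once LINE FILE : append LINE to FILE unless the exact line exists
append_once() {
  grep -qxF -e "$1" "$2" 2>/dev/null || printf '%s\n' "$1" >> "$2"
}

profile=$(mktemp)   # stand-in for ~/.profile in this demo
# Single quotes keep $HOME literal so it is expanded at login time
append_once 'export KUBECONFIG=$HOME/.kube/config' "$profile"
append_once 'export KUBECONFIG=$HOME/.kube/config' "$profile"
grep -c KUBECONFIG "$profile"   # prints 1: the second call added nothing
rm -f "$profile"
```

The same helper could be used for the two completion lines instead of tee -a, which appends them again on every run.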

Error message:

systemctl status kubelet

6月 23 09:04:02 master-01 kubelet[8085]: E0623 09:04:02.186893 8085 kubelet.go:2187] Container runtime network not ready: NetworkReady=false reason:NetworkPluginNotReady mes...ninitialized

6月 23 09:04:04 master-01 kubelet[8085]: W0623 09:04:04.938700 8085 cni.go:237] Unable to update cni config: no networks found in /etc/cni/net.d

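Cause: initialization on the master node failed, and it was then re-initialized without a reset or after an incomplete reset. Another possibility is that no network add-on (such as flannel or calico) has been installed.

Solution: run the following commands to clear the previous initialization state, then initialize again.

systemctl stop kubelet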

docker stop $(docker ps -aq)

docker rm -f $(docker ps -aq)

systemctl stop docker

kubeadm reset

rm -rf $HOME/.kube /etc/kubernetes

rm -rf /var/lib/cni/ /etc/cni/ /var/lib/kubelet/*

iptables -F && iptables -t nat -F && iptables -t mangle -F && iptables -X

systemctl start docker

systemctl start kubelet

# Install the calico network plugin (non-HA setup)

rm -f calico-3.13.1.yaml

wget -L https://kuboard.cn/install-script/calico/calico-3.13.1.yaml

kubectl apply -f calico-3.13.1.yaml
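The "no networks found in /etc/cni/net.d" warning can be checked directly on the node. The sketch below is a hypothetical helper that looks for at least one CNI configuration file in a directory; treating .conf, .conflist and .json as the recognised extensions is an assumption about what the CNI loader accepts.

```shell
# cni_configured DIR : succeed when DIR holds at least one CNI config file
cni_configured() {
  for f in "$1"/*.conf "$1"/*.conflist "$1"/*.json; do
    # An unmatched glob stays literal, so -f filters it out
    [ -f "$f" ] && return 0
  done
  return 1
}

if cni_configured /etc/cni/net.d; then
  echo "CNI config present"
else
  echo "no CNI config: install a network add-on such as calico"
fi
```

If the directory is still empty a minute or two after applying the calico manifest, the calico-node Pod on that node is the next thing to inspect.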

kubeadm reset cannot reset the node and reports "retrying of unary invoker failed"

Error message:

[reset] Removing info for node "master-01" from the ConfigMap "kubeadm-config" in the "kube-system" Namespace

{"level":"warn","ts":"2020-06-23T09:10:30.074+0800","caller":"clientv3/retry_interceptor.go:61","msg":"retrying of unary invoker failed","target":"endpoint://client-174bf993-5731-4b29-9b30-7e958ade79a4/10.10.107.191:2379","attempt":0,"error":"rpc error: code = Unknown desc = etcdserver: re-configuration failed due to not enough started members"}

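Cause: the etcd container was still running before the reset, so the node could not be reset.

Solution: stop all containers and the docker service, then run the node reset again.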

docker stop $(docker ps -aq) && systemctl stop docker

Problem description:

kubeadm init --config=kubeadm-config.yaml --upload-certs

[init] Using Kubernetes version: v1.18.4

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

error execution phase preflight: [preflight] Some fatal errors occurred:

[ERROR ImagePull]: failed to pull image registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.18.4: output: Error response from daemon: manifest for registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.18.4 not found: manifest unknown: manifest unknown

, error: exit status 1

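Cause: images cannot be downloaded from the official k8s.gcr.io registry, and the mirror in use, registry.cn-hangzhou.aliyuncs.com/google_containers/, does not carry the components for the requested k8s version.

Solution: try another mirror, or import the image archives into docker offline (see the earlier note "2-Kubernetes入门手动安装部署"). Before running the command above, it is advisable to run kubeadm config images pull --image-repository mirrorgcrio --kubernetes-version=1.18.4 to check whether the images can be pulled.

# Common mirrors of k8s.gcr.io

# gcr.azk8s.cn/google_containers/ # no longer available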

registry.aliyuncs.com/google_containers/

registry.cn-hangzhou.aliyuncs.com/google_containers/

# k8s.gcr.io images mirrored in harbor

mirrorgcrio
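When switching mirrors by hand, each image reference has to be rewritten to point at the mirror. The sketch below shows one way to do that with shell parameter expansion; mirror_ref is a hypothetical helper that keeps only the last path component and tag of the original reference.

```shell
# mirror_ref IMAGE MIRROR : print IMAGE re-homed under MIRROR
mirror_ref() {
  # ${2%/} drops a trailing slash from the mirror,
  # ${1##*/} keeps only "name:tag" from the original reference
  printf '%s/%s\n' "${2%/}" "${1##*/}"
}

mirror_ref k8s.gcr.io/kube-apiserver:v1.18.4 registry.aliyuncs.com/google_containers/
```

The printed reference can then be passed to docker pull, followed by docker tag to give the image the name kubeadm expects.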

Problem description:

PING gateway-example.example.svc.cluster.local (10.105.141.232) 56(84) bytes of data.

From 172.17.76.171 (172.17.76.171) icmp_seq=1 Time to live exceeded

From 172.17.76.171 (172.17.76.171) icmp_seq=2 Time to live exceeded

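Cause: in Kubernetes networking, a Service generally cannot be pinged, because Kubernetes only allocates a virtual IP address for it. The virtual IP is implemented by one of three proxy modes: userspace, iptables, or IPVS.

In these modes traffic to a Service IP is matched on protocol and port, so there is usually nothing behind the address to answer ICMP (Internet Control Message Protocol). The Service can still be reached with curl or telnet.

Solution:

$ kubectl cluster-info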

# Kubernetes master is running at https://k8s.weiyigeek.top:6443

# KubeDNS is running at https://k8s.weiyigeek.top:6443/api/v1/namespaces/kube-system/services/kube-dns:dns/proxy
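Since the Service answers on its port rather than to ICMP, a quick test is an HTTP request against its cluster DNS name. The helper below is only a sketch that composes that URL; svc_url is a hypothetical name, port 8080 is an assumption about the example Service, and cluster.local is the default cluster domain.

```shell
# svc_url NAME NAMESPACE PORT [CLUSTER_DOMAIN]
svc_url() {
  printf 'http://%s.%s.svc.%s:%s/\n' "$1" "$2" "${4:-cluster.local}" "$3"
}

svc_url gateway-example example 8080
```

From inside a Pod, something like curl -sS -m 3 "$(svc_url gateway-example example 8080)" then exercises the same path that application traffic takes.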

Error message:

$ sudo kubeadm init --config=/home/weiyigeek/k8s-init/kubeadm-init-config.yaml --upload-certs | tee kubeadm_init.log

[init] Using Kubernetes version: v1.19.6

[preflight] Running pre-flight checks

error execution phase preflight: [preflight] Some fatal errors occurred:

[ERROR Swap]: running with swap on is not supported. Please disable swap

[preflight] If you know what you are doing, you can make a check non-fatal with `--ignore-preflight-errors=...`

To see the stack trace of this error execute with --v=5 or higher

Cause: swap has not been disabled on the machine that is joining as a node.

weiyigeek@weiyigeek-107:~$ free

total used free shared buff/cache available

Mem: 8151908 299900 7270588 956 581420 7600492

Swap: 4194300 0 4194300

Solution: disable swap.

sudo swapoff -a && sudo sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab && free # CentOS

sudo swapoff -a && sudo sed -i 's/^\/swap.img\(.*\)$/#\/swap.img \1/g' /etc/fstab && free # Ubuntu

total used free shared buff/cache available

Mem: 8151908 304428 7260196 956 587284 7595204

Swap: 0 0 0
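Editing /etc/fstab in place is hard to undo, so it can help to preview the change first. The sketch below is a hypothetical helper that prints a file with every active swap entry commented out and leaves the file itself untouched; it is demonstrated on a temporary copy with made-up entries.

```shell
# comment_swap FILE : print FILE with uncommented swap entries commented out
comment_swap() {
  # Match lines whose first non-blank character is not '#'
  # and that have "swap" as a whitespace-delimited field.
  sed -E '/^[[:space:]]*[^#[:space:]].*[[:space:]]swap[[:space:]]/ s/^/#/' "$1"
}

fstab=$(mktemp)   # stand-in for /etc/fstab
printf '%s\n' \
  'UUID=abc / ext4 defaults 0 1' \
  '/swap.img none swap sw 0 0' > "$fstab"
comment_swap "$fstab"
rm -f "$fstab"
```

Because already commented lines are skipped, running the same expression a second time does not stack extra # characters.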

0/1 nodes are available: 1 node(s) had taint {node.kubernetes.io/not-ready: }, that the pod didn't tolerate.

$ kubectl get pod --all-namespaces

rpc error: code = Unknown desc = [failed to set up sandbox container "355.....4ec7" network for pod "coredns-": networkPlugin cni failed to set up pod "coredns-6c76c8bb89-6xgjl_kube-system"

# Resource information

dial tcp 10.96.0.1:443: connect: no route to host

Error message:

dial tcp 10.96.0.1:443: i/o timeout

dial tcp 10.96.0.1:443: connect: no route to host

Cause:

Error message:

sudo kubeadm init

[init] Using Kubernetes version: v1.21.0

[preflight] Running pre-flight checks

[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/

error execution phase preflight: [preflight] Some fatal errors occurred:

[ERROR Swap]: running with swap on is not supported. Please disable swap

[preflight] If you know what you are doing, you can make a check non-fatal with `--ignore-preflight-errors=...`

To see the stack trace of this error execute with --v=5 or higher

Cause: ERROR Swap is raised because swap is still enabled on the operating system. WARNING IsDockerSystemdCheck indicates that Docker's cgroup driver is not systemd.

Solution:

# 1. Disable swap

sudo swapoff -a && sudo sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab && free # CentOS

sudo swapoff -a && sudo sed -i 's/^\/swap.img\(.*\)$/#\/swap.img \1/g' /etc/fstab && free #Ubuntu

# 2. Change Docker's cgroup driver to systemd

cat /etc/docker/daemon.json

{

"registry-mirrors": [

"https://registry.cn-hangzhou.aliyuncs.com"

],

"max-concurrent-downloads": 10,

"log-driver": "json-file",

"log-level": "warn",

"log-opts": {

"max-size": "10m",

"max-file": "3"

},

"exec-opts": ["native.cgroupdriver=systemd"],

"storage-driver": "overlay2",

"insecure-registries": ["harbor.weiyigeek", "harbor.weiyi", "harbor.cloud"],

"data-root":"/home/data/docker/"

}
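To confirm which cgroup driver a daemon.json requests without reading the whole file, the setting can be extracted with sed. This is a minimal sketch with a hypothetical function name; it only inspects the file, so it says nothing about what the running daemon is using until Docker has been restarted.

```shell
# cgroup_driver_from_json FILE : print the native.cgroupdriver value, if set
cgroup_driver_from_json() {
  sed -n 's/.*native\.cgroupdriver=\([a-z]*\).*/\1/p' "$1"
}

conf=$(mktemp)   # stand-in for /etc/docker/daemon.json
printf '%s\n' '{' '  "exec-opts": ["native.cgroupdriver=systemd"]' '}' > "$conf"
cgroup_driver_from_json "$conf"
rm -f "$conf"
```

After editing the real file, restart Docker with systemctl restart docker so the new driver takes effect before rerunning kubeadm init.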

Error message:

Error response from daemon: manifest for registry.cn-hangzhou.aliyuncs.com/google_containers/coredns/coredns:v1.8.0 not found: manifest unknown: manifest unknown

Error response from daemon: No such image: registry.cn-hangzhou.aliyuncs.com/google_containers/coredns/coredns:v1.8.0

Error: No such image: registry.cn-hangzhou.aliyuncs.com/google_containers/coredns/coredns:v1.8.0

Solution: search for the image on Docker Hub (https://hub.docker.com/), pull it, and retag it.

docker pull coredns/coredns:1.8.0

docker tag coredns/coredns:1.8.0 registry.cn-hangzhou.aliyuncs.com/google_containers/coredns/coredns:v1.8.0

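Description: Pending means the Pod has not yet been scheduled onto a Node. Run kubectl describe pod <pod-name> to view the Pod's events and work out why it was not scheduled.

$ kubectl describe pod mypod

- Insufficient resources: no Node in the cluster can satisfy the CPU, memory, GPU, or ephemeral storage requested by the Pod. The fix is to delete unused Pods or add new Nodes.

Description: again, start with kubectl describe pod

$ kubectl -n kube-system describe pod nginx-pod

# The Pod's sandbox container fails to start; check the kubelet log for the specific cause:

$ ip link set cni0 down

Image pull failures, for example

Description: this is usually caused by a wrong image name or a wrong pull secret for a private image. Use docker pull <image> to verify that the image can be pulled.

$ kubectl describe pod mypod

# 1. For a private image, first create a Secret of type docker-registry

Description: CrashLoopBackOff means the container started and then exited abnormally. The Pod's restart count is usually greater than 0; start by checking the container log.

* Container process exited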

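A Pod in the Error state usually means something went wrong during startup. Common causes include:

A ConfigMap, Secret, PV, or other dependency does not exist

The requested resources exceed limits set by the administrator, such as a LimitRange

The Pod violates a cluster security policy, such as a PodSecurityPolicy

The container is not authorized to operate on cluster resources; for example, with RBAC enabled, the ServiceAccount needs a role binding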
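The Pod states covered so far each have a natural first command. As a sketch, the hypothetical helper below maps a status to that command and prints it rather than running it; the mapping is a summary of the notes above, not an exhaustive rule.

```shell
# first_check STATUS POD : print a reasonable first diagnostic command
first_check() {
  case $1 in
    CrashLoopBackOff)
      # The previous container instance holds the log of the crash
      echo "kubectl logs $2 --previous" ;;
    Pending|ContainerCreating|ImagePullBackOff|ErrImagePull|Error)
      # Events at the bottom of describe explain scheduling and pull errors
      echo "kubectl describe pod $2" ;;
    *)
      echo "kubectl get pod $2 -o wide" ;;
  esac
}

first_check CrashLoopBackOff mypod
first_check Pending mypod
```

For image pull errors, the describe output names the image, which can then be verified on the node with docker pull.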

Since v1.5, Kubernetes no longer deletes the Pods running on a Node just because the Node is unreachable; it marks them as Terminating or Unknown instead. There are three ways to remove Pods in these states:

Delete the Node from the cluster. On a public cloud, kube-controller-manager deletes the Node object automatically once the VM is deleted. On bare-metal clusters the administrator has to delete the Node manually (for example, kubectl delete node <node-name>).

The Node recovers. kubelet then contacts kube-apiserver again to confirm the desired state of these Pods and decides whether to delete them or keep them running.

Force deletion by the user. Run kubectl delete pods <pod> --grace-period=0 --force to force-delete the Pod. This is not recommended unless you know for certain that the Pod has stopped (for example, the VM or physical machine hosting the Node is powered off). Force-deleting Pods managed by a StatefulSet in particular can easily lead to split-brain or data loss.

If kubelet runs as a Docker container, its log may contain errors like the following:

{"log":"E0926 19:59:39.977461 54420 nestedpendingoperations.go:262] Operation for \"\\\"kubernetes.io/secret/30f3ffec-a29f-11e7-b693-246e9607517c-default-token-6tpnm\\\" (\\\"30f3ffec-a29f-11e7-b693-246e9607517c\\\")\" failed. No retries permitted until 2017-09-26 19:59:41.977419403 +0800 CST (durationBeforeRetry 2s). Error: UnmountVolume.TearDown failed for volume \"default-token-6tpnm\" (UniqueName: \"kubernetes.io/secret/30f3ffec-a29f-11e7-b693-246e9607517c-default-token-6tpnm\") pod \"30f3ffec-a29f-11e7-b693-246e9607517c\" (UID: \"30f3ffec-a29f-11e7-b693-246e9607517c\") : remove /var/lib/kubelet/pods/30f3ffec-a29f-11e7-b693-246e9607517c/volumes/kubernetes.io~secret/default-token-6tpnm: device or resource busy\n","stream":"stderr","time":"2017-09-26T11:59:39.977728079Z"}

In that case, set the --containerized flag on the kubelet container and pass in the following volumes:

# Using the calico network plugin as an example

-v /:/rootfs:ro,shared \

-v /sys:/sys:ro \

-v /dev:/dev:rw \

-v /var/log:/var/log:rw \

-v /run/calico/:/run/calico/:rw \

-v /run/docker/:/run/docker/:rw \

-v /run/docker.sock:/run/docker.sock:rw \

-v /usr/lib/os-release:/etc/os-release \

-v /usr/share/ca-certificates/:/etc/ssl/certs \

-v /var/lib/docker/:/var/lib/docker:rw,shared \

-v /var/lib/kubelet/:/var/lib/kubelet:rw,shared \

-v /etc/kubernetes/ssl/:/etc/kubernetes/ssl/ \

-v /etc/kubernetes/config/:/etc/kubernetes/config/ \

-v /etc/cni/net.d/:/etc/cni/net.d/ \

-v /opt/cni/bin/:/opt/cni/bin/ \

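A Pod in the Terminating state is normally deleted automatically once kubelet is running properly again. Sometimes, however, it cannot be deleted, not even with kubectl delete pods. Check the finalizers on the Pod, for example:

"finalizers": [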

"foregroundDeletion"

]

kubelet uses inotify to watch the /etc/kubernetes/manifests directory (configurable with the kubelet --pod-manifest-path option) for changes to static Pods, and recreates the corresponding Pod when a file changes. Occasionally a new Pod is not created after a static Pod manifest is modified; a simple fix is to restart kubelet.

A Namespace stuck in the Terminating state usually has one of two causes:

Resources in the Namespace are still being deleted

The Namespace's finalizers were not cleaned up properly

For the first case, list all remaining resources with the following command:

kubectl api-resources --verbs=list --namespaced -o name | xargs -n 1 kubectl get --show-kind --ignore-not-found -n $NAMESPACE

For the second case, clear the Namespace's finalizer list manually:

kubectl get namespaces $NAMESPACE -o json | jq '.spec.finalizers=[]' > /tmp/ns.json

kubectl proxy &

curl -k -H "Content-Type: application/json" -X PUT --data-binary @/tmp/ns.json http://127.0.0.1:8001/api/v1/namespaces/$NAMESPACE/finalize

Warning Failed 2m19s (x4 over 3m4s) kubelet Error: failed to create containerd task: OCI runtime create failed: container_linux.go:380: starting container process caused: process_linux.go:545: container init caused: process_linux.go:508: setting cgroup config for procHooks process caused: failed to write "107374182400000": write /sys/fs/cgroup/cpu,cpuacct/system.slice/containerd.service/kubepods-burstable-pod6e586bca_1fd9_412a_892c_a77b38d7f3ec.slice:cri-containerd:app/cpu.cfs_quota_us: invalid argument: unknown
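The number in this event can be related to the Pod's CPU limit with a little arithmetic. As a sketch, assuming the default CFS period of 100000 microseconds, the quota written to cpu.cfs_quota_us is the limit in millicores multiplied by the period and divided by 1000. The helper name is hypothetical. Read backwards, 107374182400000 corresponds to 1073741824 CPUs (2^30), which would be consistent with a CPU limit written with a memory-style suffix such as 1Gi; that reading is an inference from the number, not something the event states.

```shell
# cfs_quota_us MILLICORES [PERIOD_US] : CFS quota for a CPU limit
cfs_quota_us() {
  echo $(( $1 * ${2:-100000} / 1000 ))
}

cfs_quota_us 500             # a limit of 500m
cfs_quota_us 1073741824000   # a limit of 2^30 CPUs, expressed in millicores
```

Checking resources.limits.cpu in the Pod spec for a unit typo is therefore a reasonable first step when this error appears.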

~/K8s/Day8/demo7$ kubectl get pod

kubectl describe shows the message: waiting for a volume to be created, either by external provisioner "fuseim.pri/ifs" or manually created by system administrator. The nfs-client-provisioner Pod log (kubectl logs) then shows: unexpected error getting claim reference: selfLink was empty, can't make reference.

Solution: add - --feature-gates=RemoveSelfLink=false to the kube-apiserver manifest below, or add the flag to its systemd unit, then restart.

/etc/kubernetes/manifests/kube-apiserver.yaml

E0704 15:20:03.875017 7912 kubelet.go:1292] Image garbage collection failed once. Stats initialization may not have completed yet: failed to get imageFs info: unable to find data in memory cache

The cgroup driver is systemd, so systemd needs to be upgraded.

yum update -y

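Joining an additional host to the k8s cluster fails with "failure loading certificate for CA: couldn't load the certificate file".

Error message: when setting up a highly available cluster, adding another master to the current master produced the following error.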

failure loading certificate for CA: couldn't load the certificate file /etc/kubernetes/pki/ca.crt: open /etc/kubernetes/pki/ca.crt: no such file or directory

Cause: the new node does not have the CA certificates from the cluster's pki directory.

Solution:

scp -rp /etc/kubernetes/pki/ca.* master02:/etc/kubernetes/pki

scp -rp /etc/kubernetes/pki/sa.* master02:/etc/kubernetes/pki

scp -rp /etc/kubernetes/pki/front-proxy-ca.* master02:/etc/kubernetes/pki

scp -rp /etc/kubernetes/pki/etcd/ca.* master02:/etc/kubernetes/pki/etcd

scp -rp /etc/kubernetes/admin.conf master02:/etc/kubernetes
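The scp commands above assume that /etc/kubernetes/pki and /etc/kubernetes/pki/etcd already exist on the target. As a sketch, the hypothetical helper below prints the same copy steps for any host, preceded by a mkdir over ssh; it is a dry run that only echoes the commands, so they can be reviewed before being executed.

```shell
# pki_copy_plan HOST : print the commands that copy the shared certificates
pki_copy_plan() {
  host=$1
  # Added step (assumption): make sure the target directories exist
  echo "ssh $host mkdir -p /etc/kubernetes/pki/etcd"
  for f in 'pki/ca.*' 'pki/sa.*' 'pki/front-proxy-ca.*'; do
    echo "scp -rp /etc/kubernetes/$f $host:/etc/kubernetes/pki"
  done
  echo "scp -rp /etc/kubernetes/pki/etcd/ca.* $host:/etc/kubernetes/pki/etcd"
  echo "scp -rp /etc/kubernetes/admin.conf $host:/etc/kubernetes"
}

pki_copy_plan master02
```

Once the output looks right, piping it to sh runs the steps in order on the current master.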

@WeiyiGeek - To go far, you cannot skip a single step along the way

If you find a mistake in this article, please leave your feedback below, or contact the author by email (master#weiyigeek.top) or through the WeChat public account WeiyiGeek.

More articles are available at WeiyiGeek Blog, home page: https://weiyigeek.top

Original article path: _posts/虚拟云容/云容器/Kubernetes/n0-Kubernetes配置解析与入坑解决FAQ记录.md

When reposting, please credit the source. Original URL: https://blog.weiyigeek.top/2020/5-2-472.html

Content on this site is licensed under the Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International license