[TOC]

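0x00 Introduction

Description: In a Kubernetes cluster, the data for every resource you operate on is stored in the etcd database. Nodes can fail or stop working, clusters get migrated, and other incidents happen. To recover cluster data as quickly as possible in any of these cases, we need to back up the etcd cluster data regularly for disaster recovery.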

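In a K8S cluster or a Docker environment, backing up etcd data is very convenient. Usually, taking a snapshot of etcd on one node is enough to back up the etcd data, and the snapshot file contains all Kubernetes state and critical information. With an etcd backup in hand, the Kubernetes cluster can be restored quickly even in a disaster scenario (for example, losing all control-plane nodes), so the boss no longer has to worry about the system failing to come back up.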

First published at: https://mp.weixin.qq.com/s/eblhCVjEFdvw7B-tlNTi-w

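0x01 Environment Preparation

1. Install the etcdctl binary

Description: The etcdctl binary can be downloaded from github.com/coreos/etcd/releases; choose the version you need. For example, you can run the install_etcdctl.sh script below after changing the version information in it.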

install_etcdctl.sh

```shell
#!/bin/bash
ETCD_VER=v3.5.5
ETCD_DIR=etcd-download
DOWNLOAD_URL=https://github.com/coreos/etcd/releases/download

mkdir ${ETCD_DIR}
cd ${ETCD_DIR}
wget ${DOWNLOAD_URL}/${ETCD_VER}/etcd-${ETCD_VER}-linux-amd64.tar.gz
tar -xzvf etcd-${ETCD_VER}-linux-amd64.tar.gz
cd etcd-${ETCD_VER}-linux-amd64
cp etcdctl /usr/local/bin/
```

Verify the installation:

```shell
$ etcdctl version
etcdctl version: 3.5.5
API version: 3.5
```
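The install script above hard-codes the `linux-amd64` tarball. The sketch below instead derives the download URL from the machine's `uname -m`. It assumes the release naming pattern `etcd-<version>-linux-<arch>.tar.gz` used in install_etcdctl.sh. The helper names `etcd_arch` and `etcd_tarball_url` are hypothetical, and only the three architectures listed are mapped.

```shell
#!/bin/sh
# Sketch: derive the etcd release tarball URL instead of hard-coding linux-amd64.
# Hypothetical helpers; assumes the file naming used in install_etcdctl.sh.

DOWNLOAD_URL=${DOWNLOAD_URL:-https://github.com/coreos/etcd/releases/download}

# Map `uname -m` output to the architecture suffix used in etcd release file names.
etcd_arch() {
  case "$1" in
    x86_64 | amd64) echo amd64 ;;
    aarch64 | arm64) echo arm64 ;;
    ppc64le) echo ppc64le ;;
    *)
      echo "unsupported architecture: $1" >&2
      return 1
      ;;
  esac
}

# Print the Linux tarball URL for a version (e.g. v3.5.5) and an architecture.
etcd_tarball_url() {
  ver=$1
  arch=$2
  echo "${DOWNLOAD_URL}/${ver}/etcd-${ver}-linux-${arch}.tar.gz"
}

# Example:
# arch=$(etcd_arch "$(uname -m)") && wget "$(etcd_tarball_url v3.5.5 "$arch")"
```

The URL pattern is kept identical to the original script, so only the architecture part changes.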

2. Pull a Docker image that includes etcdctl

Procedure:

```shell
docker run --rm \
  -v /data/backup:/backup \
  -v /etc/kubernetes/pki/etcd:/etc/kubernetes/pki/etcd \
  --env ETCDCTL_API=3 \
  registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.5.1-0 \
  /bin/sh -c "etcdctl version"
```

Verify the installation:

```
3.5.1-0: Pulling from google_containers/etcd
e8614d09b7be: Pull complete
45b6afb4a92f: Pull complete
.......
Digest: sha256:64b9ea357325d5db9f8a723dcf503b5a449177b17ac87d69481e126bb724c263
Status: Downloaded newer image for registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.5.1-0
etcdctl version: 3.5.1
API version: 3.5
```

3. Create a Pod from the etcdctl image in Kubernetes

```shell
$ crictl pull registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.5.5-0
$ kubectl run etcdctl --image=registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.5.5-0 --command -- /usr/local/bin/etcdctl version
$ kubectl logs -f etcdctl
etcdctl version: 3.5.5
API version: 3.5
$ kubectl delete pod etcdctl
```

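0x02 Backup in Practice

1. Back up with the binary etcdctl client

Tip: For a single-node Kubernetes cluster we only need to take a snapshot of its one etcd database. For a multi-master cluster, back up the etcd member on each master node in turn, in case the etcd data changes while the backup is being taken!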

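The environment used here is a highly available multi-master cluster with three control-plane nodes and four worker nodes. If you are not yet familiar with K8s clusters, or want to build a highly available cluster, follow the WeiyiGeek official account and reply 【Kubernetes学习之路汇总】 (the Kubernetes learning-path collection) to get the study materials: https://www.weiyigeek.top/wechat.html?key=Kubernetes学习之路汇总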

```shell
$ kubectl get node
NAME            STATUS   ROLES                  AGE    VERSION
weiyigeek-107   Ready    control-plane,master   279d   v1.23.1
weiyigeek-108   Ready    control-plane,master   278d   v1.23.1
weiyigeek-109   Ready    control-plane,master   278d   v1.23.1
weiyigeek-223   Ready    work                   278d   v1.23.1
weiyigeek-224   Ready    work                   278d   v1.23.1
weiyigeek-225   Ready    work                   279d   v1.23.1
weiyigeek-226   Ready    work                   118d   v1.23.1
```

Procedure:

```shell
$ mkdir -pv /backup
$ ls /etc/kubernetes/pki/etcd/
ca.crt  ca.key  healthcheck-client.crt  healthcheck-client.key  peer.crt  peer.key  server.crt  server.key

$ kubectl get pod -n kube-system -o wide | grep "etcd"
etcd-weiyigeek-107   1/1   Running   1   279d   192.168.12.107   weiyigeek-107   <none>   <none>
etcd-weiyigeek-108   1/1   Running   0   278d   192.168.12.108   weiyigeek-108   <none>   <none>
etcd-weiyigeek-109   1/1   Running   0   278d   192.168.12.109   weiyigeek-109   <none>   <none>

$ netstat -ano | grep "107:2379"
tcp        0      0 192.168.12.107:2379     0.0.0.0:*               LISTEN      off (0.00/0/0)
$ netstat -ano | grep "108:2379"
tcp        0      0 192.168.12.108:2379     0.0.0.0:*               LISTEN      off (0.00/0/0)
$ netstat -ano | grep "109:2379"
tcp        0      0 192.168.12.109:2379     0.0.0.0:*               LISTEN      off (0.00/0/0)

$ etcdctl --endpoints=https://10.20.176.212:2379 \
  --cacert=/etc/kubernetes/pki/etcd/ca.crt \
  --cert=/etc/kubernetes/pki/etcd/healthcheck-client.crt \
  --key=/etc/kubernetes/pki/etcd/healthcheck-client.key \
  snapshot save /backup/etcd-snapshot.db
{"level":"info","ts":"2022-10-23T16:32:26.020+0800","caller":"snapshot/v3_snapshot.go:65","msg":"created temporary db file","path":"/backup/etcd-snapshot.db.part"}
{"level":"info","ts":"2022-10-23T16:32:26.034+0800","logger":"client","caller":"v3/maintenance.go:211","msg":"opened snapshot stream; downloading"}
{"level":"info","ts":"2022-10-23T16:32:26.034+0800","caller":"snapshot/v3_snapshot.go:73","msg":"fetching snapshot","endpoint":"https://10.20.176.212:2379"}
{"level":"info","ts":"2022-10-23T16:32:26.871+0800","logger":"client","caller":"v3/maintenance.go:219","msg":"completed snapshot read; closing"}
{"level":"info","ts":"2022-10-23T16:32:26.946+0800","caller":"snapshot/v3_snapshot.go:88","msg":"fetched snapshot","endpoint":"https://10.20.176.212:2379","size":"112 MB","took":"now"}
{"level":"info","ts":"2022-10-23T16:32:26.946+0800","caller":"snapshot/v3_snapshot.go:97","msg":"saved","path":"/backup/etcd-snapshot.db"}
Snapshot saved at /backup/etcd-snapshot.db
```

You can also query the Kubernetes data stored in etcd with etcdctl. Kubernetes uses the etcd v3 API, and the etcd cluster uses TLS authentication by default, so first set a few environment variables.

```shell
export ETCDCTL_API=3
export ETCDCTL_CACERT=/etc/kubernetes/pki/etcd/ca.crt
export ETCDCTL_CERT=/etc/kubernetes/pki/etcd/peer.crt
export ETCDCTL_KEY=/etc/kubernetes/pki/etcd/peer.key

# List keys only (first key shown)
etcdctl --endpoints=https://10.20.176.212:2379 get /registry --prefix --keys-only=true | head -n 1
/registry/apiextensions.k8s.io/customresourcedefinitions/bgpconfigurations.crd.projectcalico.org

# Fetch the default namespace as JSON (keys and values are base64-encoded)
etcdctl --endpoints=https://10.20.176.212:2379 get /registry/namespaces/default --prefix --keys-only=false -w=json | python3 -m json.tool
{
    "header": {
        "cluster_id": 11404821125176160774,
        "member_id": 7099450421952911102,
        "revision": 30240109,
        "raft_term": 3
    },
    "kvs": [
        {
            "key": "L3JlZ2lzdHJ5L25hbWVzcGFjZXMvZGVmYXVsdA==",
            "create_revision": 192,
            "mod_revision": 192,
            "version": 1,
            "value": "azhzAAoPCg.......XNwYWNlEGgAiAA=="
        }
    ],
    "count": 1
}

# Decode the key and the value
echo -e "L3JlZ2lzdHJ5L25hbWVzcGFjZXMvZGVmYXVsdA==" | base64 -d
echo -e "azhzAAoPCgJ2MRIJTmFtZXNwYWNlEogCCu0BCgdkZWZhdWx0EgAaACIAKiQ5ZDQyYmYxMy03OGM0LTQ4NzQtOThiYy05NjNlMDg1MDYyZjYyADgAQggIo8yllQYQAFomChtrdWJlcm5ldGVzLmlvL21ldGFkYXRhLm5hbWUSB2RlZmF1bHR6AIoBfQoOa3ViZS1hcGlzZXJ2ZXISBlVwZGF0ZRoCdjEiCAijzKWVBhAAMghGaWVsZHNWMTpJCkd7ImY6bWV0YWRhdGEiOnsiZjpsYWJlbHMiOnsiLiI6e30sImY6a3ViZXJuZXRlcy5pby9tZXRhZGF0YS5uYW1lIjp7fX19fUIAEgwKCmt1YmVybmV0ZXMaCAoGQWN0aXZlGgAiAA==" | base64 -d

# Compare with the same object as returned by the API server
$ kubectl get ns default -o yaml
apiVersion: v1
kind: Namespace
metadata:
  creationTimestamp: "2022-06-15T04:54:59Z"
  labels:
    kubernetes.io/metadata.name: default
  name: default
  resourceVersion: "192"
  uid: 9d42bf13-78c4-4874-98bc-963e085062f6
spec:
  finalizers:
  - kubernetes
status:
  phase: Active

# List the Pod keys in the default namespace
etcdctl --endpoints=https://10.20.176.212:2379 get /registry/pods/default --prefix --keys-only=true
etcdctl --endpoints=https://10.20.176.212:2379 get /registry/pods/default --prefix --keys-only=true -w=json | python3 -m json.tool
{
    "header": {
        "cluster_id": 11404821125176160774,
        "member_id": 7099450421952911102,
        "revision": 30442415,
        "raft_term": 3
    },
    "kvs": [
        {
            "key": "L3JlZ2lzdHJ5L3BvZHMvZGVmYXVsdC9uZnMtZGV2LW5mcy1zdWJkaXItZXh0ZXJuYWwtcHJvdmlzaW9uZXItY2Y3Njg0ZjhiLWZ6bDlo",
            "create_revision": 5510865,
            "mod_revision": 5510883,
            "version": 5
        },
        {
            "key": "L3JlZ2lzdHJ5L3BvZHMvZGVmYXVsdC9uZnMtbG9jYWwtbmZzLXN1YmRpci1leHRlcm5hbC1wcm92aXNpb25lci02Zjk3ZDQ0YmI4LTQyNHRr",
            "create_revision": 5510967,
            "mod_revision": 5510987,
            "version": 5
        }
    ],
    "count": 2
}
```
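Decoding keys one at a time with `base64 -d` gets tedious when a prefix query returns many of them. The sketch below extracts every `key` field from the `-w json` output and decodes it. It assumes GNU `base64` and the JSON format shown above, and uses `grep` and `sed` instead of `jq`. `decode_etcd_keys` is a hypothetical helper name.

Only keys are decoded. Kubernetes stores most object values in protobuf form: the value `azhzAAoP...` above begins with the bytes `k8s` followed by a NUL, which is the Kubernetes protobuf prefix. Decoding a value therefore yields only partly readable bytes.

```shell
#!/bin/sh
# Sketch: print the base64-decoded keys found in `etcdctl get ... -w json` output.
# Reads the JSON from stdin; relies on etcdctl base64-encoding the "key" fields.
decode_etcd_keys() {
  grep -o '"key" *: *"[^"]*"' |
    sed 's/^"key" *: *"\([^"]*\)"$/\1/' |
    while IFS= read -r encoded; do
      printf '%s' "$encoded" | base64 -d
      echo
    done
}

# Example (assumes the ETCDCTL_* variables above are exported):
# etcdctl --endpoints=https://10.20.176.212:2379 get /registry/pods/default \
#   --prefix --keys-only -w json | decode_etcd_keys
```

The pattern tolerates spaces around the colon, so it works on both the raw `-w json` output and the `python3 -m json.tool` formatting.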

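2. Back up with etcdctl from a Docker image

Description: On a machine with Docker installed, we can back up the etcd database of a K8s cluster very conveniently. Docker is already installed here; readers who are unfamiliar with Docker or still need a Docker environment should set one up first.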

```shell
$ docker version
Client: Docker Engine - Community
 Version:           20.10.3
.......
Server: Docker Engine - Community
 Engine:
  Version:          20.10.3

$ mkdir -vp /backup
$ docker run --rm \
  -v /backup:/backup \
  -v /etc/kubernetes/pki/etcd:/etc/kubernetes/pki/etcd \
  --env ETCDCTL_API=3 \
  registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.5.1-0 \
  /bin/sh -c "etcdctl --endpoints=https://192.168.12.107:2379 \
  --cacert=/etc/kubernetes/pki/etcd/ca.crt \
  --key=/etc/kubernetes/pki/etcd/healthcheck-client.key \
  --cert=/etc/kubernetes/pki/etcd/healthcheck-client.crt \
  snapshot save /backup/etcd-snapshot.db"
Snapshot saved at /backup/etcd-snapshot.db

$ ls -alh /backup/etcd-snapshot.db
-rw------- 1 root root 8.6M Oct 23 16:58 /backup/etcd-snapshot.db
```

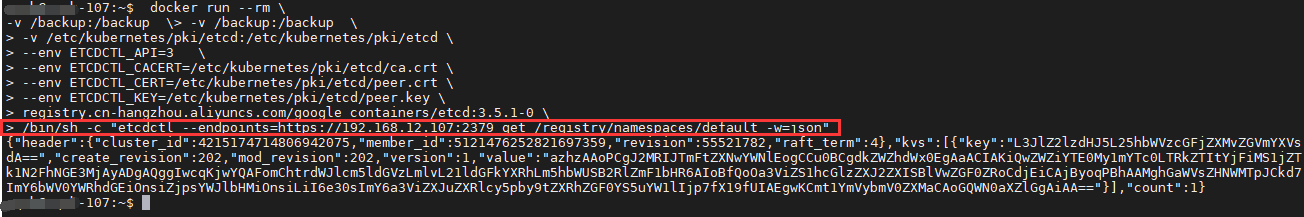
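The `docker run` line above is long and error-prone to retype for every command, so it can be wrapped in a shell function. This sketch assumes the image tag and certificate paths used above. It also assumes `/usr/local/bin/etcdctl` exists in the image, which is the path used in the earlier `kubectl run` example. `etcdctl_in_docker` and the `DOCKER`, `ETCD_IMAGE`, `PKI_DIR` and `BACKUP_DIR` variables are hypothetical. Setting `DOCKER=echo` prints the command instead of running it, which is a simple way to check it first.

```shell
#!/bin/sh
# Sketch: run etcdctl from the etcd image with the cluster's certificates mounted.
# All variable names are hypothetical defaults; override them as needed.
ETCD_IMAGE=${ETCD_IMAGE:-registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.5.1-0}
PKI_DIR=${PKI_DIR:-/etc/kubernetes/pki/etcd}
BACKUP_DIR=${BACKUP_DIR:-/backup}

etcdctl_in_docker() {
  # ${DOCKER:-docker} lets DOCKER=echo act as a dry run.
  "${DOCKER:-docker}" run --rm \
    -v "${BACKUP_DIR}:/backup" \
    -v "${PKI_DIR}:/etc/kubernetes/pki/etcd" \
    -e ETCDCTL_API=3 \
    -e ETCDCTL_CACERT=/etc/kubernetes/pki/etcd/ca.crt \
    -e ETCDCTL_CERT=/etc/kubernetes/pki/etcd/healthcheck-client.crt \
    -e ETCDCTL_KEY=/etc/kubernetes/pki/etcd/healthcheck-client.key \
    "${ETCD_IMAGE}" /usr/local/bin/etcdctl "$@"
}

# Example:
# etcdctl_in_docker --endpoints=https://192.168.12.107:2379 snapshot save /backup/etcd-snapshot.db
```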

Use a Docker container to view the data in the k8s cluster's etcd database:

```shell
$ docker run --rm \
  -v /backup:/backup \
  -v /etc/kubernetes/pki/etcd:/etc/kubernetes/pki/etcd \
  --env ETCDCTL_API=3 \
  --env ETCDCTL_CACERT=/etc/kubernetes/pki/etcd/ca.crt \
  --env ETCDCTL_CERT=/etc/kubernetes/pki/etcd/peer.crt \
  --env ETCDCTL_KEY=/etc/kubernetes/pki/etcd/peer.key \
  registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.5.1-0 \
  /bin/sh -c "etcdctl --endpoints=https://192.168.12.107:2379 get /registry/namespaces/default -w=json"
```

Result:

3. Quickly create a Pod in the Kubernetes cluster for a manual backup

Prepare a Pod manifest and deploy it:

```shell
cat <<EOF | kubectl apply -f -
apiVersion: v1
kind: Pod
metadata:
  name: etcd-backup
  labels:
    tool: backup
spec:
  containers:
  - name: redis
    image: registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.5.5-0
    imagePullPolicy: IfNotPresent
    # Running "etcd" here only keeps the container alive so that we can exec into it
    command:
    - sh
    - -c
    - "etcd"
    env:
    - name: ETCDCTL_API
      value: "3"
    - name: ETCDCTL_CACERT
      value: "/etc/kubernetes/pki/etcd/ca.crt"
    - name: ETCDCTL_CERT
      value: "/etc/kubernetes/pki/etcd/healthcheck-client.crt"
    - name: ETCDCTL_KEY
      value: "/etc/kubernetes/pki/etcd/healthcheck-client.key"
    volumeMounts:
    - name: "pki"
      mountPath: "/etc/kubernetes"
    - name: "backup"
      mountPath: "/backup"
  volumes:
  - name: pki
    hostPath:
      path: "/etc/kubernetes"
      type: "DirectoryOrCreate"
  - name: "backup"
    hostPath:
      path: "/storage/dev/backup"
      type: "DirectoryOrCreate"
  restartPolicy: Never
  # Run on a master node; if the masters carry the default
  # node-role.kubernetes.io/master:NoSchedule taint, a matching toleration is also needed
  nodeSelector:
    node-role.kubernetes.io/master: ""
EOF
pod/etcd-backup created
```

Open a shell in the Pod and run the backup commands:

```shell
~$ kubectl exec -it etcd-backup -- sh
sh-5.1
sh-5.1
sh-5.1
Snapshot saved at /backup/etcd-108-32616-snapshot.db
sh-5.1
sh-5.1
2db31a5d67ec1034, started, weiyigeek-108, https://192.168.12.108:2380, https://192.168.12.108:2379, false
42efe7cca897d765, started, weiyigeek-109, https://192.168.12.109:2380, https://192.168.12.109:2379, false
471323846709334f, started, weiyigeek-107, https://192.168.12.107:2380, https://192.168.12.107:2379, false
sh-5.1
https://192.168.12.107:2379 is healthy: successfully committed proposal: took = 11.930331ms
https://192.168.12.109:2379 is healthy: successfully committed proposal: took = 11.930993ms
https://192.168.12.108:2379 is healthy: successfully committed proposal: took = 109.515933ms
sh-5.1
https://192.168.12.107:2379, 471323846709334f, 3.5.1, 9.2 MB, false, false, 4, 71464830, 71464830,
https://192.168.12.108:2379, 2db31a5d67ec1034, 3.5.1, 9.2 MB, false, false, 4, 71464830, 71464830,
https://192.168.12.109:2379, 42efe7cca897d765, 3.5.1, 9.2 MB, true, false, 4, 71464830, 71464830,
```

At this point, the manual etcd snapshot backup is complete!
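Several of the checks above (member list, endpoint health, endpoint status) report on all three members at once. etcdctl accepts the client endpoints as a single comma-separated `--endpoints` value, so the list can be built once from the member IPs instead of repeating the flag per member. The helper below, `etcd_endpoints`, is a hypothetical sketch that assumes https on the default client port 2379.

```shell
#!/bin/sh
# Sketch: build a comma-separated etcd client endpoint list from member IPs.
# etcd_endpoints IP... -> https://IP1:2379,https://IP2:2379,...
etcd_endpoints() {
  list=""
  for ip in "$@"; do
    list="${list:+$list,}https://${ip}:2379"
  done
  printf '%s\n' "$list"
}

# Example (inside the backup Pod, where the ETCDCTL_* variables are already set):
# EPS=$(etcd_endpoints 192.168.12.107 192.168.12.108 192.168.12.109)
# etcdctl --endpoints="$EPS" endpoint health
# etcdctl --endpoints="$EPS" endpoint status
```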

4. Scheduled backups with a CronJob in the Kubernetes cluster

First, prepare a CronJob manifest:

```shell
cat > etcd-database-backup.yaml <<'EOF'
apiVersion: batch/v1
kind: CronJob
metadata:
  name: etcd-database-backup
  annotations:
    descript: "scheduled etcd database backup"
spec:
  schedule: "*/5 * * * *"
  jobTemplate:
    spec:
      template:
        spec:
          containers:
          - name: etcdctl
            image: registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.5.5-0
            env:
            - name: ETCDCTL_API
              value: "3"
            - name: ETCDCTL_CACERT
              value: "/etc/kubernetes/pki/etcd/ca.crt"
            - name: ETCDCTL_CERT
              value: "/etc/kubernetes/pki/etcd/healthcheck-client.crt"
            - name: ETCDCTL_KEY
              value: "/etc/kubernetes/pki/etcd/healthcheck-client.key"
            # Snapshot every member; one random suffix per run groups the three files
            command:
            - /bin/sh
            - -c
            - |
              export RAND=$RANDOM
              etcdctl --endpoints=https://192.168.12.107:2379 snapshot save /backup/etcd-107-${RAND}-snapshot.db
              etcdctl --endpoints=https://192.168.12.108:2379 snapshot save /backup/etcd-108-${RAND}-snapshot.db
              etcdctl --endpoints=https://192.168.12.109:2379 snapshot save /backup/etcd-109-${RAND}-snapshot.db
            volumeMounts:
            - name: "pki"
              mountPath: "/etc/kubernetes"
            - name: "backup"
              mountPath: "/backup"
            imagePullPolicy: IfNotPresent
          volumes:
          - name: "pki"
            hostPath:
              path: "/etc/kubernetes"
              type: "DirectoryOrCreate"
          - name: "backup"
            hostPath:
              path: "/storage/dev/backup"
              type: "DirectoryOrCreate"
          # As with the Pod above, tainted masters also need a matching toleration
          nodeSelector:
            node-role.kubernetes.io/master: ""
          restartPolicy: Never
EOF
```

Create the CronJob from the manifest:

```shell
kubectl apply -f etcd-database-backup.yaml
```

Check the created CronJob and the backup Pods it runs:

```
NAME                                 SCHEDULE      SUSPEND   ACTIVE   LAST SCHEDULE   AGE
cronjob.batch/etcd-database-backup   */5 * * * *   False     0        21s             14m

NAME                                      READY   STATUS      RESTARTS   AGE
pod/etcd-database-backup-27776740-rhzkk   0/1     Completed   0          21s
```

Check the scheduled Pod's logs and the backup files:

```shell
$ kubectl logs -f pod/etcd-database-backup-27776740-rhzkk
Snapshot saved at /backup/etcd-107-25615-snapshot.db
Snapshot saved at /backup/etcd-108-25615-snapshot.db
Snapshot saved at /backup/etcd-109-25615-snapshot.db

$ ls -lt
total 25M
-rw------- 1 root root 8.6M Oct 24 21:12 etcd-107-25615-snapshot.db
-rw------- 1 root root 7.1M Oct 24 21:12 etcd-108-25615-snapshot.db
-rw------- 1 root root 8.8M Oct 24 21:12 etcd-109-25615-snapshot.db
```

The etcd snapshot backup of the cluster is now complete!
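The CronJob above runs every five minutes and names snapshots with `$RANDOM`. As a result, the file names do not sort by time, and old snapshots are never removed.

The sketch below shows an alternative. It uses a timestamp suffix (`date +%Y%m%d%H%M%S`) and a "keep the newest N per member" retention policy; both are illustrative choices. It assumes the container image provides `date`, `find` and `sort`. `snapshot_name`, `prune_snapshots` and `backup_members` are hypothetical helpers, and `backup_members` assumes the `ETCDCTL_*` variables from the manifest are set.

```shell
#!/bin/sh
# Sketch: time-stamped snapshot names plus "keep the newest N" retention.

# snapshot_name LABEL TIMESTAMP -> etcd-LABEL-TIMESTAMP-snapshot.db
snapshot_name() {
  printf 'etcd-%s-%s-snapshot.db\n' "$1" "$2"
}

# prune_snapshots DIR LABEL KEEP: delete all but the KEEP newest snapshots of LABEL.
# The timestamp in the name sorts lexically, so a reverse sort lists newest first.
prune_snapshots() {
  find "$1" -maxdepth 1 -type f -name "etcd-$2-*-snapshot.db" |
    sort -r | tail -n +"$(($3 + 1))" |
    while IFS= read -r old; do rm -f -- "$old"; done
}

# backup_members DIR KEEP IP...: snapshot each member, then apply retention.
backup_members() {
  dir=$1
  keep=$2
  shift 2
  ts=$(date +%Y%m%d%H%M%S)
  for ip in "$@"; do
    label=${ip##*.} # last octet, e.g. 107, as in the original file names
    etcdctl --endpoints="https://${ip}:2379" snapshot save "${dir}/$(snapshot_name "$label" "$ts")" &&
      prune_snapshots "$dir" "$label" "$keep"
  done
}

# Example (inside the CronJob container):
# backup_members /backup 12 192.168.12.107 192.168.12.108 192.168.12.109
```

Pruning only runs after a successful `snapshot save`, so a failed run never deletes the last good snapshots.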

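0x03 Restore in Practice

1. Restoring a single-master cluster

Description: When the resource data of a single-master cluster is lost, it can be restored quickly as follows.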

Procedure:

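Tip: For a single-node K8S cluster, restore with the following commands:

```shell
# Stop the static Pods (etcd, kube-apiserver, ...) by moving their manifests out of the kubelet's watch directory
mv /etc/kubernetes/manifests/ /etc/kubernetes/manifests-backup/
# Keep the old data directory; the restore writes a fresh one
mv /var/lib/etcd /var/lib/etcd.bak
ETCDCTL_API=3 etcdctl snapshot restore /backup/etcd-212-32616-snapshot.db --data-dir=/var/lib/etcd/ --endpoints=https://10.20.176.212:2379 --cacert=/etc/kubernetes/pki/etcd/ca.crt --cert=/etc/kubernetes/pki/etcd/peer.crt --key=/etc/kubernetes/pki/etcd/peer.key
# Afterwards, move the manifests back to /etc/kubernetes/manifests so the kubelet starts the static Pods again
```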
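`etcdctl snapshot restore` will not restore into an existing, populated data directory, which is why `/var/lib/etcd` is moved aside first. The sketch below is a conservative pre-flight check before running the restore. It fails early if the snapshot file is missing or empty, or if the target data directory path exists at all. `check_restore_target` is a hypothetical helper.

```shell
#!/bin/sh
# Sketch: pre-flight checks before `etcdctl snapshot restore`.
# check_restore_target SNAPSHOT DATA_DIR -> returns 0 only if it looks safe to restore.
check_restore_target() {
  snapshot=$1
  data_dir=$2
  if [ ! -s "$snapshot" ]; then
    echo "snapshot '$snapshot' is missing or empty" >&2
    return 1
  fi
  # Conservative: the target must not exist at all (move the old one aside first).
  if [ -e "$data_dir" ]; then
    echo "data dir '$data_dir' already exists; move it aside first" >&2
    return 1
  fi
  return 0
}

# Example:
# check_restore_target /backup/etcd-212-32616-snapshot.db /var/lib/etcd &&
#   ETCDCTL_API=3 etcdctl snapshot restore /backup/etcd-212-32616-snapshot.db --data-dir=/var/lib/etcd
```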

2. Restoring a multi-master cluster

1. Note: the etcd database here runs inside the Kubernetes cluster (on the control-plane nodes); it is not a separately deployed external cluster.

```shell
$ kubectl get pod -n kube-system etcd-devtest-master-212 etcd-devtest-master-213 etcd-devtest-master-214
NAME                      READY   STATUS    RESTARTS   AGE
etcd-devtest-master-212   1/1     Running   3          134d
etcd-devtest-master-213   1/1     Running   0          134d
etcd-devtest-master-214   1/1     Running   0          134d
```

2. As mentioned earlier, before restoring, check the current members and the health status of the etcd cluster.

```shell
ETCDCTL_API=3 etcdctl member list --endpoints=https://10.20.176.212:2379 \
  --cacert=/etc/kubernetes/pki/etcd/ca.crt --cert=/etc/kubernetes/pki/etcd/peer.crt --key=/etc/kubernetes/pki/etcd/peer.key
6286508b550016fe, started, devtest-master-212, https://10.20.176.212:2380, https://10.20.176.212:2379, false
9dd15f852caf8e05, started, devtest-master-214, https://10.20.176.214:2380, https://10.20.176.214:2379, false
e0f23bd90b7c7c0d, started, devtest-master-213, https://10.20.176.213:2380, https://10.20.176.213:2379, false

ETCDCTL_API=3 etcdctl endpoint status --endpoints=https://10.20.176.212:2379 --endpoints=https://10.20.176.213:2379 --endpoints=https://10.20.176.214:2379 \
  --cacert=/etc/kubernetes/pki/etcd/ca.crt --cert=/etc/kubernetes/pki/etcd/peer.crt --key=/etc/kubernetes/pki/etcd/peer.key --write-out table

ETCDCTL_API=3 etcdctl endpoint health --endpoints=https://10.20.176.212:2379 --endpoints=https://10.20.176.213:2379 --endpoints=https://10.20.176.214:2379 \
  --cacert=/etc/kubernetes/pki/etcd/ca.crt --cert=/etc/kubernetes/pki/etcd/peer.crt --key=/etc/kubernetes/pki/etcd/peer.key
https://10.20.176.212:2379 is healthy: successfully committed proposal: took = 14.686875ms
https://10.20.176.214:2379 is healthy: successfully committed proposal: took = 16.201187ms
https://10.20.176.213:2379 is healthy: successfully committed proposal: took = 18.962462ms
```

3. Stop kube-apiserver and etcd on all master machines, then restore each node's etcd data from the backup (the example below is for devtest-master-212).

```shell
# Stop the static Pods (kube-apiserver, etcd, ...) by moving their manifests out of the kubelet's watch directory
mv /etc/kubernetes/manifests/ /etc/kubernetes/manifests-backup/
# Move the current data directory aside
mv /var/lib/etcd /var/lib/etcd.bak
# Restore the snapshot as member devtest-master-212 of the three-member cluster
ETCDCTL_API=3 etcdctl snapshot restore /backup/etcd-212-32616-snapshot.db \
  --data-dir=/var/lib/etcd \
  --name=devtest-master-212 \
  --cacert=/etc/kubernetes/pki/etcd/ca.crt --cert=/etc/kubernetes/pki/etcd/peer.crt --key=/etc/kubernetes/pki/etcd/peer.key \
  --initial-cluster-token=etcd-cluster-0 \
  --initial-cluster=devtest-master-212=https://10.20.176.212:2380,devtest-master-213=https://10.20.176.213:2380,devtest-master-214=https://10.20.176.214:2380 \
  --initial-advertise-peer-urls=https://10.20.176.212:2380
```

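Tip: When a node joins the control plane, static Pod manifests are generated in /etc/kubernetes/manifests for the API Server, Controller Manager and Scheduler (and, with etcd running on the control-plane nodes as here, for etcd). The kubelet on the host watches that directory for manifests being created, changed or deleted, and creates, updates or deletes the corresponding Pods. This is why moving the manifests out of the directory stops these control-plane Pods, and moving them back starts them again.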

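4. Then start etcd and kube-apiserver again by moving the manifests back into /etc/kubernetes/manifests, and check whether the Pods have recovered.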

```shell
$ kubectl get pod -n kube-system -l component=etcd
$ kubectl get componentstatuses
Warning: v1 ComponentStatus is deprecated in v1.19+
NAME                 STATUS    MESSAGE                         ERROR
scheduler            Healthy   ok
controller-manager   Healthy   ok
etcd-0               Healthy   {"health":"true","reason":""}
```
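The restore in step 3 has to be repeated on every master. Each node uses the same snapshot file, `--initial-cluster-token` and `--initial-cluster`; only `--name` and `--initial-advertise-peer-urls` change per node. After step 4, etcd and kube-apiserver also need some time before they respond, so checking immediately can give a false alarm.

The sketch below addresses both points. It prints one consistent restore command per node without running it, and provides a generic retry loop for the checks in step 4. `initial_cluster`, `restore_cmd` and `retry` are hypothetical helpers. Peers are assumed to use https on port 2380 as above, and the attempt count and delay in the example are arbitrary.

```shell
#!/bin/sh
# Sketch: consistent per-node restore commands, plus a retry loop for post-restore checks.
# Members are NAME=IP pairs; peers are assumed to use https on port 2380.

# initial_cluster NAME=IP... -> NAME1=https://IP1:2380,NAME2=https://IP2:2380,...
initial_cluster() {
  out=""
  for pair in "$@"; do
    out="${out:+$out,}${pair%%=*}=https://${pair#*=}:2380"
  done
  printf '%s\n' "$out"
}

# restore_cmd SNAPSHOT TOKEN SELF MEMBER... -> prints (does not run) the restore
# command for node SELF (NAME=IP); only --name and the peer URL differ per node.
restore_cmd() {
  snap=$1
  token=$2
  self=$3
  shift 3
  printf 'ETCDCTL_API=3 etcdctl snapshot restore %s --data-dir=/var/lib/etcd --name=%s --initial-cluster-token=%s --initial-cluster=%s --initial-advertise-peer-urls=https://%s:2380\n' \
    "$snap" "${self%%=*}" "$token" "$(initial_cluster "$@")" "${self#*=}"
}

# retry ATTEMPTS DELAY CMD...: rerun CMD until it succeeds, sleeping DELAY seconds between tries.
retry() {
  attempts=$1
  delay=$2
  shift 2
  i=1
  while :; do
    "$@" && return 0
    [ "$i" -ge "$attempts" ] && return 1
    i=$((i + 1))
    sleep "$delay"
  done
}

# Examples:
# MEMBERS="devtest-master-212=10.20.176.212 devtest-master-213=10.20.176.213 devtest-master-214=10.20.176.214"
# for m in $MEMBERS; do restore_cmd /backup/etcd-212-32616-snapshot.db etcd-cluster-0 "$m" $MEMBERS; done
# retry 30 10 kubectl wait --for=condition=Ready pod -l component=etcd -n kube-system --timeout=10s
```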

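3. Resolving inconsistent data across etcd members in a K8S cluster

Description: During a routine check of our workloads we found that `kubectl get pod -n xxx` returned different resources from one run to the next. etcdctl reported all three members healthy with identical RaftIndex values, but their DB sizes differed considerably. The investigation below confirmed that the etcd data was inconsistent.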

```shell
$ ETCDCTL_API=3 etcdctl endpoint status --endpoints=https://192.168.12.108:2379 --endpoints=https://192.168.12.107:2379 --endpoints=https://192.168.12.109:2379 \
  --cacert=/etc/kubernetes/pki/etcd/ca.crt --cert=/etc/kubernetes/pki/etcd/peer.crt --key=/etc/kubernetes/pki/etcd/peer.key --write-out table
+-----------------------------+------------------+---------+---------+-----------+-----------+------------+
|          ENDPOINT           |        ID        | VERSION | DB SIZE | IS LEADER | RAFT TERM | RAFT INDEX |
+-----------------------------+------------------+---------+---------+-----------+-----------+------------+
| https://192.168.12.108:2379 | 2db31a5d67ec1034 |   3.5.1 |  7.4 MB |     false |         4 |   72291872 |
| https://192.168.12.107:2379 | 471323846709334f |   3.5.1 |  9.0 MB |     false |         4 |   72291872 |
| https://192.168.12.109:2379 | 42efe7cca897d765 |   3.5.1 |  9.2 MB |      true |         4 |   72291872 |
+-----------------------------+------------------+---------+---------+-----------+-----------+------------+
```

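The kube-apiserver logs did not yield any useful information either, so at first we could only guess that a kube-apiserver cache update problem was to blame. Just as we were about to dig in from that angle, the colleague reported something even stranger: a Pod he had just created suddenly disappeared from the Pod list returned by kubectl! What on earth was going on? After repeated testing we found that when listing Pods with kubectl, that Pod sometimes appeared and sometimes did not. Here is the catch: a list request like the one kubectl sends is not served from the kube-apiserver cache. kube-apiserver reads the data directly from etcd and returns it to the client. Could etcd itself be at fault?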

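As is well known, etcd is a strongly consistent KV store: once a write has succeeded, two reads should never return different data. Refusing to believe it, we queried the etcd cluster status and data directly with etcdctl. All 3 members reported healthy with identical RaftIndex values, and the etcd logs showed no errors. The only suspicious point was that the DB sizes of the 3 members differed considerably.

Next, we pointed the client at each member's endpoint in turn and counted the keys on each member. The 3 members returned different key counts, and two members differed by as much as several thousand keys! When we queried the newly created Pod directly with etcdctl, some endpoints returned it and others did not.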
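The per-endpoint key count check just described can be scripted. The sketch below assumes the `ETCDCTL_*` environment variables from earlier are exported; `collect_key_counts` and `counts_consistent` are hypothetical helpers. It counts only non-empty lines of `etcdctl get --keys-only`, so any blank lines in the output are not counted. It reports a mismatch through its exit status.

```shell
#!/bin/sh
# Sketch: compare how many keys each etcd member returns.

# collect_key_counts ENDPOINT... -> prints "ENDPOINT COUNT" per member
# (assumes ETCDCTL_API/ETCDCTL_CACERT/ETCDCTL_CERT/ETCDCTL_KEY are exported).
collect_key_counts() {
  for ep in "$@"; do
    count=$(etcdctl --endpoints="$ep" get / --prefix --keys-only | grep -c .)
    printf '%s %s\n' "$ep" "$count"
  done
}

# counts_consistent: reads "ENDPOINT COUNT" lines on stdin,
# exits 0 if all counts are equal and 1 otherwise.
counts_consistent() {
  awk 'NR == 1 { first = $2 } $2 != first { bad = 1 } END { exit bad }'
}

# Example:
# collect_key_counts https://192.168.12.107:2379 https://192.168.12.108:2379 \
#   https://192.168.12.109:2379 | counts_consistent || echo "key counts differ between members"
```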

At this point it was essentially certain that the etcd members really were inconsistent with one another.

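Approach

Treat weiyigeek-109 (the current leader, with the largest DB size) as the source of truth. First back up every member and make sure weiyigeek-109 is the leader. Then, for each inconsistent member, remove it from the cluster, move its data directory aside, and add it back as a new member so that it resynchronizes its data from the leader.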

Resolution in practice: here the etcd data of weiyigeek-109 is used to rebuild the members on weiyigeek-107 and weiyigeek-108.

```shell
# 1. Back up every member first
etcdctl --endpoints=https://192.168.12.107:2379 snapshot save /backup/etcd-107-snapshot.db
etcdctl --endpoints=https://192.168.12.108:2379 snapshot save /backup/etcd-108-snapshot.db
etcdctl --endpoints=https://192.168.12.109:2379 snapshot save /backup/etcd-109-snapshot.db

# 2. Make sure weiyigeek-109 (42efe7cca897d765) is the leader;
#    etcdctl sends move-leader through the current leader, so its endpoint must be among --endpoints
etcdctl --cacert=/etc/kubernetes/pki/etcd/ca.crt --cert=/etc/kubernetes/pki/etcd/peer.crt --key=/etc/kubernetes/pki/etcd/peer.key --endpoints=192.168.12.107:2379 move-leader 42efe7cca897d765

# 3. On the node being rebuilt: archive the old data directory, then move it aside
#    (archive first: after the mv, /var/lib/etcd no longer exists)
tar -czvf etcd-20230308bak.tar.gz /var/lib/etcd
mv /var/lib/etcd /var/lib/etcd.bak

# 4. Look up the inconsistent member and remove it (2db31a5d67ec1034 is weiyigeek-108)
$ etcdctl --cacert=/etc/kubernetes/pki/etcd/ca.crt --cert=/etc/kubernetes/pki/etcd/peer.crt --key=/etc/kubernetes/pki/etcd/peer.key --endpoints=192.168.12.109:2379 member list
$ etcdctl --cacert=/etc/kubernetes/pki/etcd/ca.crt --cert=/etc/kubernetes/pki/etcd/peer.crt --key=/etc/kubernetes/pki/etcd/peer.key --endpoints=192.168.12.109:2379 member remove 2db31a5d67ec1034

# 5. Stop the local etcd static Pod and make it join the existing cluster when restarted
$ mv /etc/kubernetes/manifests/etcd.yaml /etc/kubernetes/manifests-backup/etcd.yaml
$ vim /etc/kubernetes/manifests-backup/etcd.yaml
    - --initial-cluster=weiyigeek-109=https://192.168.12.109:2380,weiyigeek-108=https://192.168.12.108:2380,weiyigeek-107=https://192.168.12.107:2380
    - --initial-cluster-state=existing

# 6. Add the member back through the healthy member 192.168.12.109
$ kubectl exec -it -n kube-system etcd-weiyigeek-107 -- sh
$ export ETCDCTL_API=3
$ ETCD_ENDPOINTS=192.168.12.109:2379
$ etcdctl --cacert=/etc/kubernetes/pki/etcd/ca.crt --cert=/etc/kubernetes/pki/etcd/peer.crt --key=/etc/kubernetes/pki/etcd/peer.key --endpoints=$ETCD_ENDPOINTS member add weiyigeek-107 --peer-urls=https://192.168.12.107:2380
Member 8cecf1f50b91e502 added to cluster 71d4ff56f6fd6e70

# 7. Start the local etcd again; with an empty data directory it syncs its data from the leader
mv /etc/kubernetes/manifests-backup/etcd.yaml /etc/kubernetes/manifests/etcd.yaml
```
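Copying member IDs into `member remove` by hand is easy to get wrong. The sketch below looks up the ID for a member name in the default comma-separated `member list` output shown earlier, where the ID is the first field and the name is the third. `member_id_by_name` is a hypothetical helper.

```shell
#!/bin/sh
# Sketch: member_id_by_name NAME -> prints the member ID of NAME,
# reading `etcdctl member list` (default simple output) from stdin.
# Exits 1 if no member with that name is listed.
member_id_by_name() {
  awk -F', ' -v name="$1" '$3 == name { print $1; found = 1 } END { if (!found) exit 1 }'
}

# Example (certificate flags as in the commands above):
# ID=$(etcdctl --endpoints=192.168.12.109:2379 member list | member_id_by_name weiyigeek-108)
# etcdctl --endpoints=192.168.12.109:2379 member remove "$ID"
```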

Verify the result:

```shell
$ for count in {107..109}; do ETCDCTL_API=3 etcdctl get / --prefix --keys-only --endpoints=https://192.168.12.${count}:2379 --cacert=/etc/kubernetes/pki/etcd/ca.crt --cert=/etc/kubernetes/pki/etcd/peer.crt --key=/etc/kubernetes/pki/etcd/peer.key | wc -l; done
1976
$ for count in {107..109}; do ETCDCTL_API=3 etcdctl endpoint status --endpoints=https://192.168.12.${count}:2379 --cacert=/etc/kubernetes/pki/etcd/ca.crt --cert=/etc/kubernetes/pki/etcd/peer.crt --key=/etc/kubernetes/pki/etcd/peer.key; done
https://192.168.12.107:2379, 8cecf1f50b91e502, 3.5.1, 9.2 MB, false, 9, 72291872
https://192.168.12.108:2379, 35672f075108e36a, 3.5.1, 9.2 MB, false, 9, 72291872
https://192.168.12.109:2379, 42efe7cca897d765, 3.5.1, 9.2 MB, true, 9, 72291872
```

This completes resolving the etcd data inconsistency in the K8S cluster and recovering the data. Thanks for your support!